Is migrating a WordPress site to a different architecture worth it?

This post compares an old architecture to a new one. The new architecture uses a different web server and PHP gateway.

Old architecture: Apache and mod_php

New architecture: Nginx and php-fpm

Below I run a web page speed test and a load test against the sites.

This is not going to be a perfect like-for-like test: the sites serve different workloads, are accessed at different times and have different configurations. The theme and plugins on the servers are different, but the database has the same content.

The Instances

Instance 1 (number1.co.za):

- Debian 9.13

- Apache/2.4.25

- PHP Version 7.3.19

- Server API: Apache 2.0 Handler

- In Amsterdam Datacenter

- 2GB memory

- CPU(s): 1

- Thread(s) per core: 1

- Core(s) per socket: 1

- Socket(s): 1

- Virtualization: VT-x

- Hypervisor vendor: KVM

- Virtualization type: full

- L1i cache: 32K

- L2 cache: 4096K

- CPU MHz: 2294.608

- stepping: 2

Instance 2 (number1.fixes.co.za):

- Ubuntu 20.04

- nginx/1.20.1

- PHP Version 7.4.3

- Server API: FPM/FastCGI

- In Johannesburg Datacenter (Teraco Isando)

- 1GB memory

- Architecture: x86_64

- CPU(s): 1

- Thread(s) per core: 1

- Core(s) per socket: 1

- Socket(s): 1

- Hypervisor vendor: KVM

- Virtualization type: full

- L1i cache: 32 KiB

- L2 cache: 4 MiB

- CPU MHz: 2499.998

- stepping: 1

Online Page speed tests

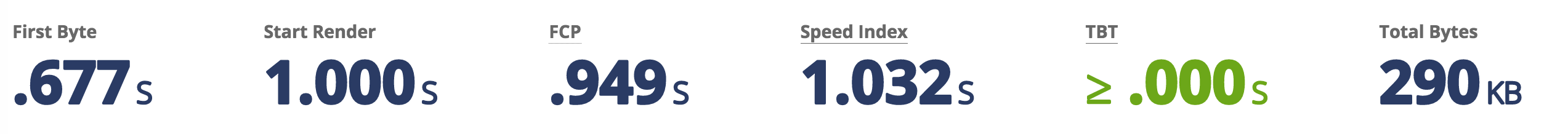

Instance 1 (Number1.co.za):

The Apache instance performed well.

- 0.884s to first byte

- 81% performance

- 1.6s TTFB (PageSpeed Insights)

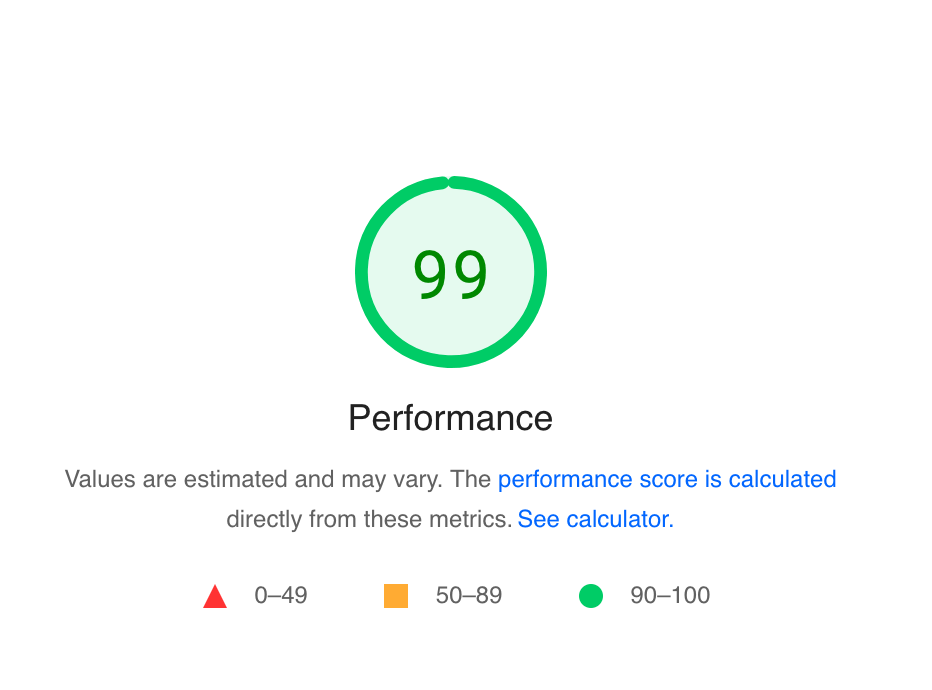

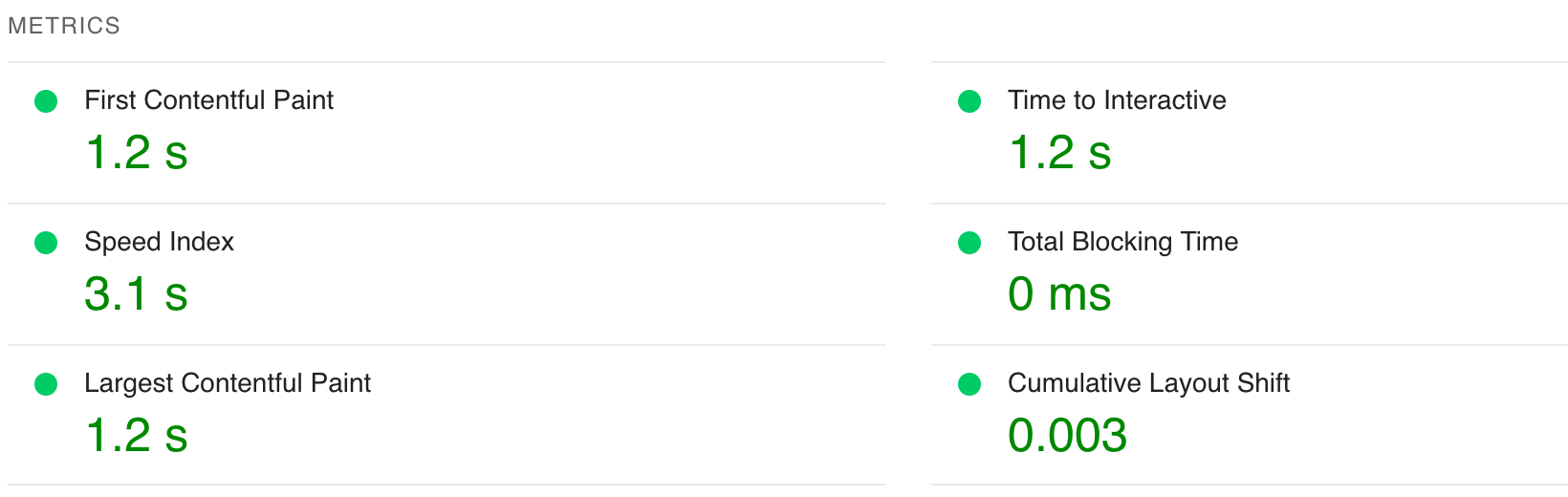

Instance 2 (Number1.fixes.co.za)

The nginx instance performed better than the Apache instance.

- 0.667s to first byte (vs 0.884s)

- 99% performance (vs 81%)
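The time-to-first-byte figures above come from online tools. As a rough cross-check, something like the following sketch could measure it from your own machine. It uses only the Python standard library, and the function name `time_to_first_byte` is my own, not part of any tool used in this post. The measurement includes DNS lookup and connection setup, so it will not match the online tools exactly.

```python
import http.client
import time
from urllib.parse import urlsplit


def time_to_first_byte(url, timeout=10.0):
    """Seconds from opening the connection until the first body byte is read."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)

    # Rebuild the request target (path plus optional query string).
    target = parts.path or "/"
    if parts.query:
        target += "?" + parts.query

    try:
        start = time.perf_counter()
        conn.request("GET", target)    # connects (TCP, plus TLS for https) and sends the request
        response = conn.getresponse()  # blocks until the status line and headers arrive
        response.read(1)               # read the first byte of the body
        return time.perf_counter() - start
    finally:
        conn.close()
```

Calling `time_to_first_byte("https://number1.co.za/")` a handful of times and taking the median would give a steadier figure than a single run.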

Under Load

How do the websites fare under load?

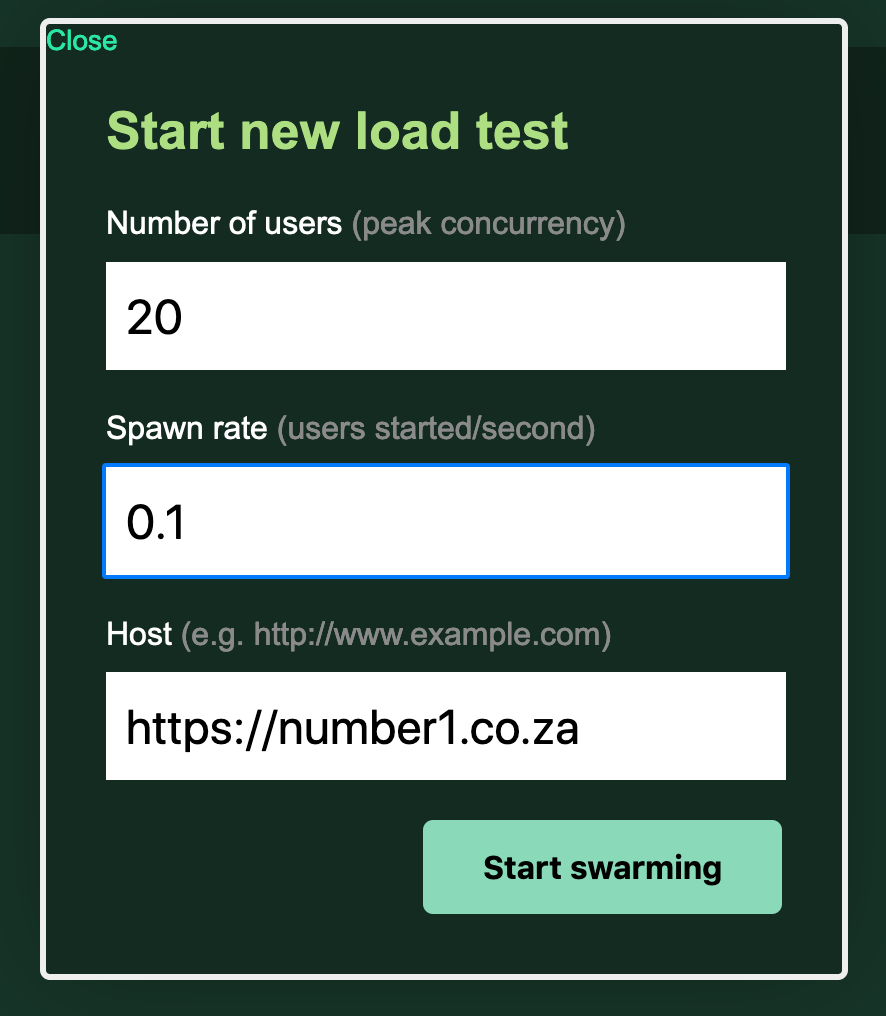

I will be using Locust (the Python locustio package) to mimic 20 active users on the site, requesting the sitemap paths for 5 minutes.

I will also inspect top to see the processes (workers) and usage of the machines.
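The sitemap paths for the load test have to come from somewhere. Here is a minimal sketch of pulling them out of a sitemap, assuming a plain `urlset` sitemap in the standard sitemaps.org namespace. A sitemap index, which WordPress can also serve, would need one more level of fetching. The function name `sitemap_paths` is hypothetical and this is not the exact script used for the test.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace used by standard sitemap files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_paths(sitemap_xml):
    """Return the URL path of every <loc> entry in a urlset sitemap."""
    root = ET.fromstring(sitemap_xml)
    paths = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        if loc.text:
            # Keep only the path so the same list works against either host.
            paths.append(urlsplit(loc.text.strip()).path or "/")
    return paths
```

In a locustfile, each simulated user could then pick a random path from this list and request it with `self.client.get(path)`. On recent Locust releases a run like the one described here would be started with something along the lines of `locust --headless -u 20 -t 5m --host https://number1.co.za`. Older locustio releases used different class names and flags.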

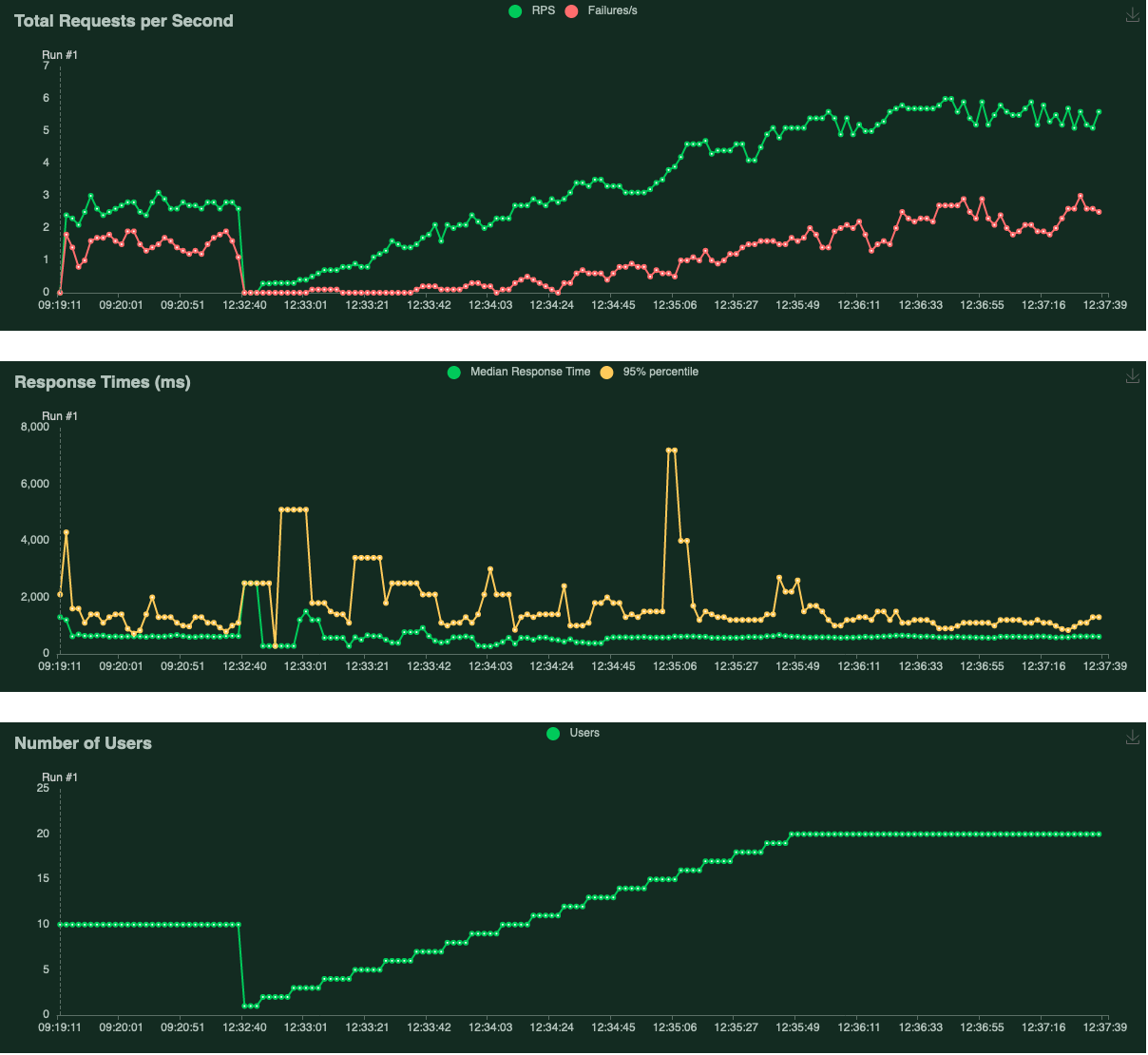

Instance 1 (Number1.co.za):

- 1121 requests

- 344 failures

- median response time: 590 ms

- average response time: 691 ms

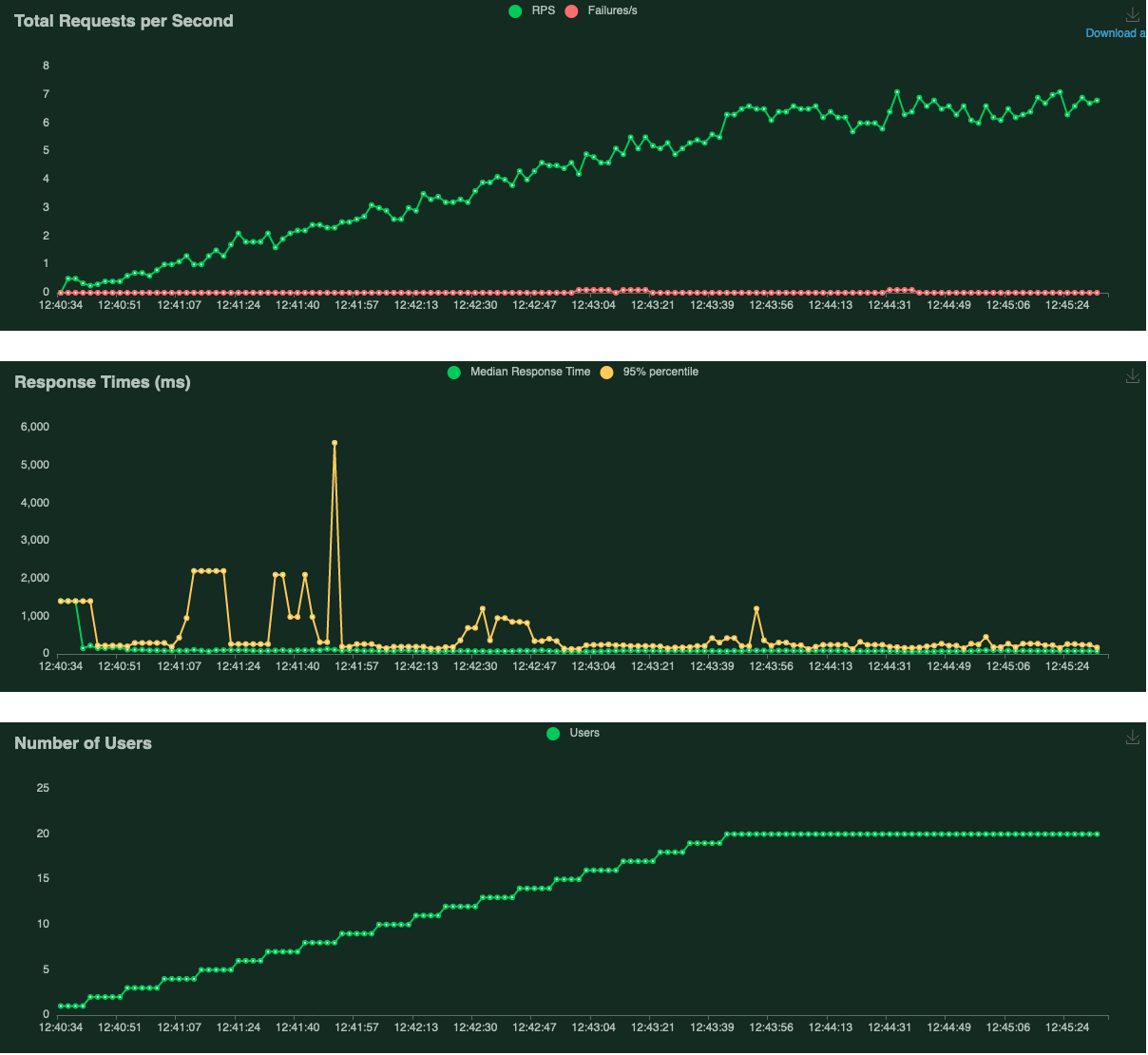

Instance 2 (Number1.fixes.co.za)

- 1327 requests

- 3 failures

- median response time: 79 ms

- average response time: 126 ms
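To put the request and failure counts above on a comparable footing, here is a small sketch that turns them into a failure rate and an approximate throughput. It assumes both runs lasted exactly the planned 5 minutes, which the Locust output would need to confirm.

```python
RUN_SECONDS = 5 * 60  # assumption: each run lasted exactly 5 minutes

# Request and failure counts reported for each instance.
results = {
    "instance 1 (Apache + mod_php)": {"requests": 1121, "failures": 344},
    "instance 2 (Nginx + PHP-FPM)": {"requests": 1327, "failures": 3},
}


def summarise(requests, failures, seconds=RUN_SECONDS):
    """Return the failure rate (percent) and the average requests per second."""
    return {
        "failure_rate_pct": round(100 * failures / requests, 2),
        "requests_per_second": round(requests / seconds, 2),
    }


for name, counts in results.items():
    print(name, summarise(**counts))
```

Roughly 31% of requests failed on instance 1 against about 0.2% on instance 2, which is a bigger difference than the raw counts suggest at first glance.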

Summary

First off, 160 to 200 ms of the latency comes from the geographic distance between my testing venue and the servers.

The Amsterdam server will have upwards of 180 ms more latency than the Johannesburg one.

Perhaps using ab (ApacheBench) on the servers themselves, so that the requests are sent locally, would have been a better testing method.

The most concerning thing was the climb in errors on instance 1 as the requests per second increased. They were 404 errors. I wonder why?

The response time from the nginx instance was about 300 to 400 ms faster (accounting for the latency differences).
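The 300 to 400 ms figure can be reproduced from the load test numbers. This sketch assumes the extra network latency to the Amsterdam server is a flat 180 ms, which is only a rough estimate.

```python
EXTRA_LATENCY_MS = 180  # assumption: extra network latency to the Amsterdam server

# Response times (ms) reported by the load test.
apache = {"median": 590, "average": 691}
nginx = {"median": 79, "average": 126}


def adjusted_gap(apache_ms, nginx_ms, extra_latency_ms=EXTRA_LATENCY_MS):
    """Response time difference after removing the estimated latency gap."""
    return apache_ms - nginx_ms - extra_latency_ms


for metric in ("median", "average"):
    raw = apache[metric] - nginx[metric]
    adjusted = adjusted_gap(apache[metric], nginx[metric])
    print(f"{metric}: raw gap {raw} ms, adjusted gap {adjusted} ms")
```

The raw gaps are 511 ms (median) and 565 ms (average). After subtracting the assumed 180 ms they come to 331 ms and 385 ms.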

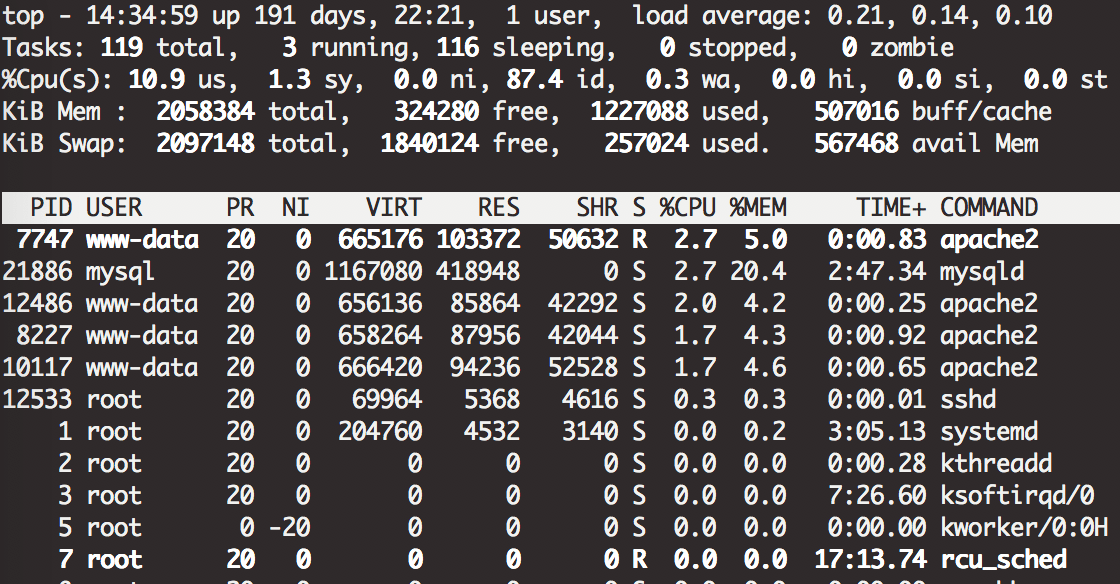

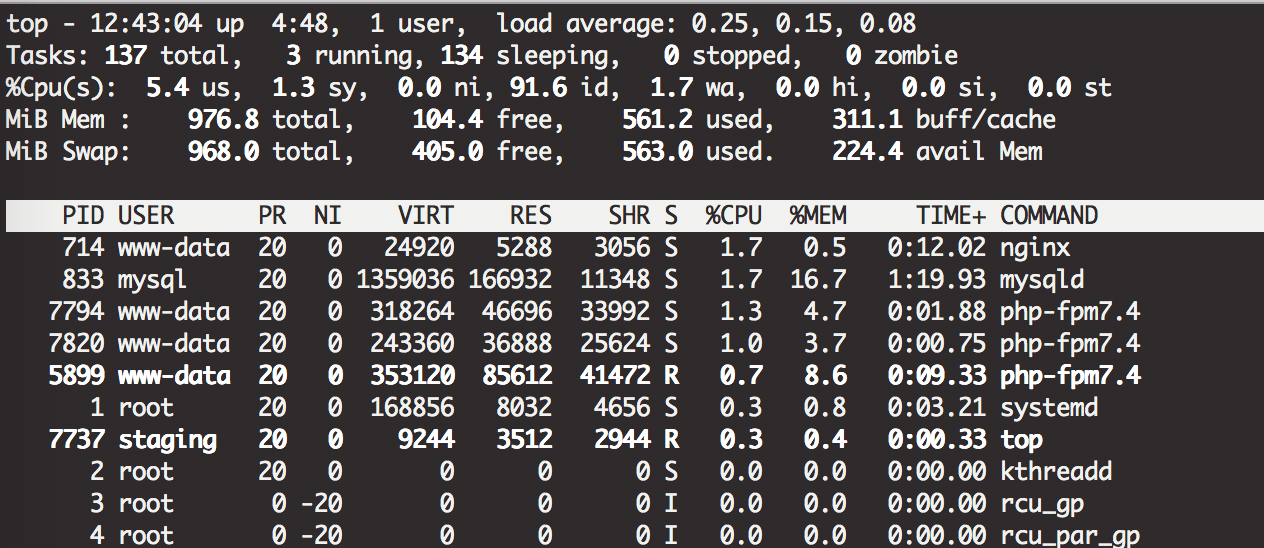

Both seemed to have only 3-4 processes running and serving requests. The CPU usage did not spike: only 10% usage on instance 1 and 5% on instance 2.

Usually the default settings are reasonable, but it is worth researching how many processes (workers) to set as a maximum to get the most out of your CPU and allow for a greater degree of concurrency before running into problems.

How many processes does Apache spawn for each request, and how many does php-fpm? How much memory and CPU does each process use?
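One common rule of thumb for the worker limit is to divide the memory left over for PHP by the average memory one worker uses. The sketch below illustrates that arithmetic only. The reserved and per-worker figures are made-up placeholders, not measurements from these servers, and `estimate_max_children` is a hypothetical helper.

```python
def estimate_max_children(total_mb, reserved_mb, avg_worker_mb):
    """Estimate how many PHP workers fit in memory (rule of thumb only).

    total_mb      -- total RAM on the machine
    reserved_mb   -- RAM kept aside for the OS, database and web server
    avg_worker_mb -- average resident memory of one PHP worker process
    """
    if avg_worker_mb <= 0:
        raise ValueError("avg_worker_mb must be positive")
    available = total_mb - reserved_mb
    # Never suggest fewer than one worker.
    return max(1, available // avg_worker_mb)


# Hypothetical numbers for a 1GB machine like instance 2:
# 400 MB reserved and 60 MB per worker are assumptions, not measured values.
print(estimate_max_children(total_mb=1024, reserved_mb=400, avg_worker_mb=60))
```

Measure the real per-process memory with top or ps under load before changing pm.max_children, since the number depends heavily on the theme and plugins in use.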

You can check your php-fpm.log for errors like:

[16-Jun-2022 21:11:37] WARNING: [pool www] server reached pm.max_children setting (5), consider raising it

Tideways has a good article on PHP tuning that is beyond the scope of what we are looking at, but it is decent further reading.
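To find out how often the pm.max_children warning shown above occurs, a short script can scan the log. This sketch assumes the log lines follow exactly the format of that example. The log file location varies by distribution and PHP version, so the function takes the lines rather than a path.

```python
import re

# Matches lines such as:
# [16-Jun-2022 21:11:37] WARNING: [pool www] server reached pm.max_children setting (5), ...
MAX_CHILDREN_WARNING = re.compile(
    r"^\[(?P<timestamp>[^\]]+)\] WARNING: \[pool (?P<pool>[^\]]+)\] "
    r"server reached pm\.max_children setting \((?P<limit>\d+)\)"
)


def max_children_warnings(lines):
    """Return (timestamp, pool, limit) for every max_children warning found."""
    hits = []
    for line in lines:
        match = MAX_CHILDREN_WARNING.match(line)
        if match:
            hits.append((match["timestamp"], match["pool"], int(match["limit"])))
    return hits
```

Passing an open log file works because file objects iterate line by line, for example `max_children_warnings(open(path))` with `path` set to wherever your php-fpm log lives.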

Conclusion

It is worth switching over to Nginx and PHP-FPM because of the performance improvements.